How to Use AI Ethically as a Creator (“Source Intelligence” and the “AI Driver’s License”)

How to Use AI Ethically as a Creator: a clear workflow to protect your voice, lower energy and water waste, and turn AI from noise into real creative leverage.


A lot of intelligent, values-led creators are having the same private thought: I want to use AI, but I do not want to become someone I do not respect. This article reframes the question of how to use AI ethically as a creator, shifting from fear-based narratives to a narrative of personal responsibility and self-empowerment.

That tension is not irrational. The current conversation around AI is flooded with fear narratives. “It will replace you.” “It will steal your voice.” “It will flatten culture.” “It will burn the planet.” Some of those concerns are legitimate. Many are also incomplete.

Here is the reframe that changes everything: the biggest risk for creators is not AI. It is unconscious use. When creators treat AI like a slot machine, they often get disposable output, creative numbness, and a quiet loss of self. When creators treat AI like an instrument, they get craft support, clarity, and momentum. The technology did not change. The operator did.

This post proposes a practical thought experiment and a simple framework that shifts you from fear-based narratives into what I call “Source Intelligence”: a way of working where you remain the origin of meaning, and AI becomes a tool that amplifies intentional creation instead of replacing it.

Why Smart Creators Feel Stuck Right Now

If you are a writer, designer, coach, filmmaker, brand strategist, educator, or founder-creator, your pain points are usually not technical. They are human:

- Voice anxiety: “If I use AI, will my work stop sounding like me?”

- Integrity anxiety: “Is this ethical, or am I participating in harm?”

- Quality fatigue: “Why is so much AI output bland, inflated, or strangely empty?”

- Decision overload: “There are a thousand tools. I do not have time to become an AI specialist.”

- Environmental concern: “How do I justify using this when I keep hearing about energy and water costs?”

Those are not obstacles to “get over.” They are signals that you care about craft, truth, and consequences. You are not behind. You are awake.

The Core Mistake: Treating AI Like a Slot Machine

The most common workflow looks like this:

Prompt → generate → dislike → regenerate → tweak → regenerate → repeat.

It feels productive, but it often produces a specific kind of output: generic phrasing, hollow confidence, and copy that reads like it was assembled, not lived. “AI slop” is not just a model problem. It is a mirror reflecting incoherence at scale.

That is the uncomfortable part. The empowering part is that mirrors can be used deliberately.

If AI is trained on human text, images, and patterns, it will naturally echo the average. If you feed it average instructions and vague intention, it will return average. If you bring clear intention, constraints, taste, and accountability, you can push the system toward higher signal. I would frame this as a “mirror” relationship where human consciousness shapes the quality of what comes back.

This is where your “Source Intelligence” begins.

From Fear-Based Narratives to Source Intelligence

“Source Intelligence” is a simple claim: The creator remains the source of meaning. AI becomes a responsive instrument, not the author of your soul.

It asks you to stop outsourcing authorship and start using AI the way you would use any powerful tool: with intention, constraints, and a clear ethic.

In practice, that means you do not ask AI to “be you.” You ask it to support you.

- You decide what is true.

- You decide what matters.

- You decide what belongs in your work.

- AI helps you explore options, compress time, surface structure, test language, and reveal blind spots.

This shifts the emotional posture from “AI is taking something from me” to “I am using a tool in alignment with my values.” That shift is not spiritual fluff. It is a practical control point.

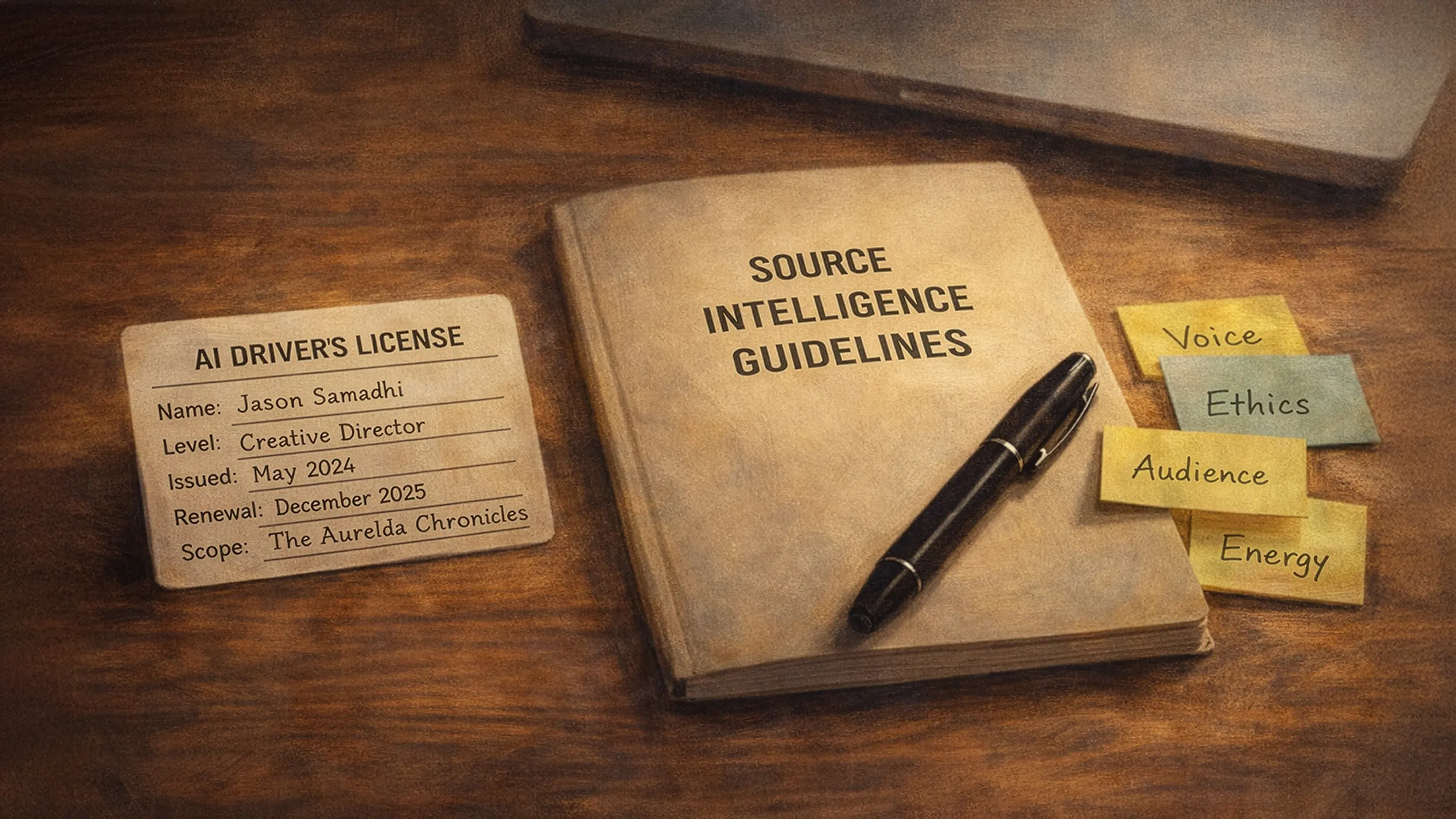

Thought Experiment: an AI Driver’s License for Creators

What I propose is something quite different: an AI Driver’s License. Not a government credential. A personal standard. A way to name competency so we stop pretending that “using AI” is one skill. Below is a four-level competency framework for creators:

- Passenger. You use AI for quick answers, basic drafts, light brainstorming. You accept most outputs as-is. This is where voice dilution and “slop” show up fastest because the tool is driving.

- Driver. You give context, examples, and constraints. You edit heavily. You treat outputs as raw material, not finished work.

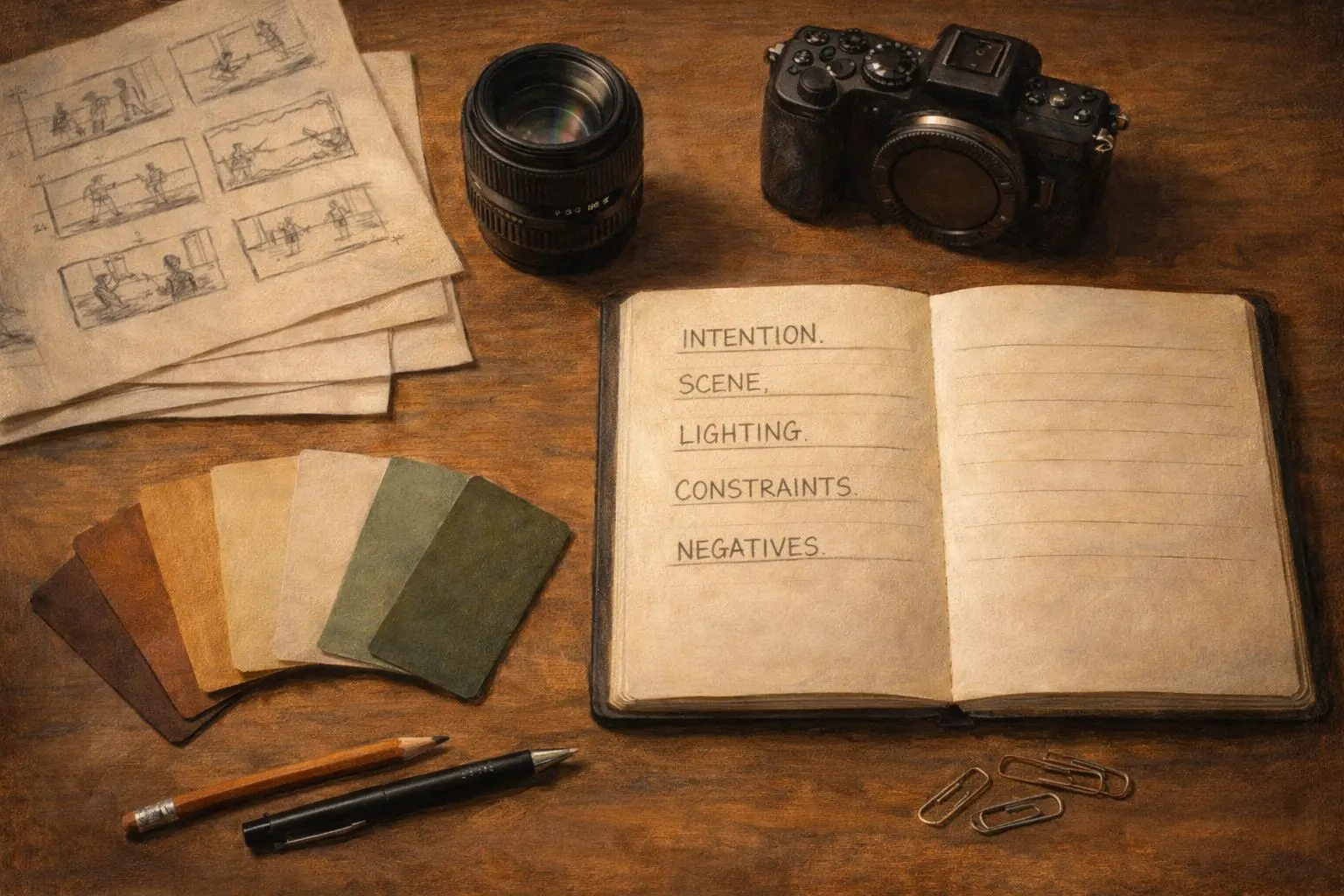

- Director. You work like a film director: you define the scene, the shot, the mood, the audience, and the boundaries. This is the move from vague prompts into creative direction with clear constraints.

- Steward. You add ethics and sustainability: fewer rerolls, better prompts, clearer intent, and transparency about where AI is used. You treat the tool as part of a wider ecosystem, not a private cheat code.

This “license” does one important thing: it makes the conversation about practice, not ideology. It lets creators say: I am learning how to drive.

“Ember,” a Case Study: What Changes When a Creator Leads

I call ChatGPT “Ember,” and I perceive “him” as a reflective intelligence (that is a personal choice): not just generating outputs, but reinforcing long-term coherence through continuity, memory, and a consistent “moral signal.”

Whether you resonate with the metaphysical framing or not (I’m not asking you to believe), the practical insight is clear:

When a creator builds a consistent container (values, voice, constraints, purpose), AI becomes dramatically more useful. The case study shows three concrete shifts that any creator can replicate:

- A stable creative constitution. Instead of “write me a post,” you supply a consistent framework: tone, ethics, goals, and boundaries.

- Constraint as a quality engine. The “film director” method reduces randomness. When the shot is defined, the system can stop guessing.

- Ritualized intention. Take a “Slow AI” perspective. In other words, treat the moment of prompting as a deliberate act, almost like entering a studio rather than scrolling a feed.

This is not mystical. It is how craft works. Great work is usually the result of clear intention, strong constraints, and patient iteration. AI does not replace that. It punishes the absence of it.

The Ethical Cost is Real, So Make Your Use Intentional

Let’s look clearly at the ecological shadow for a moment: energy draw, water use for cooling, and the Jevons paradox, where efficiency gains can drive higher total consumption.

Outside this framework, reputable institutions echo the same concern. Data centers and AI workloads are rising, and sustainability is becoming a governance issue, not just a personal preference. So what does ethical use look like at the individual creator level?

It is not “never use AI.” It is using AI like a responsible craftsperson:

- Fewer, higher-quality generations (less rerolling).

- More context up front (less waste).

- More editing and attribution (more integrity).

- Choosing smaller tools when smaller tools are enough.

- Treating AI as assistive, not authoritative.
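If it helps to make “less rerolling” concrete, here is a minimal sketch in Python of a personal generation budget. Everything in it is my own illustration, not part of any AI tool: the name `GenerationBudget`, the default of three generations, and the rule that you must say what you changed before asking again are all assumptions you can adjust.

```python
class GenerationBudget:
    """A hypothetical self-imposed cap on how often you regenerate one piece."""

    def __init__(self, max_generations: int = 3):
        if max_generations < 1:
            raise ValueError("Allow at least one generation.")
        self.max_generations = max_generations
        self.log: list[str] = []  # one note per generation you spend

    def request(self, what_changed: str) -> int:
        """Record one generation and return how many are left."""
        if not what_changed.strip():
            # No blind rerolls: each request must add context or a new constraint.
            raise ValueError("Say what you changed in the prompt before regenerating.")
        if len(self.log) >= self.max_generations:
            raise RuntimeError("Budget spent: stop rerolling and edit by hand.")
        self.log.append(what_changed)
        return self.max_generations - len(self.log)
```

The point of the design is the friction. If you cannot name what changed since the last attempt, another generation is unlikely to help, and editing by hand is probably the better next step.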

If you want a north star, NIST’s AI RMF frames trustworthiness and risk management as an ongoing practice across the lifecycle of AI systems, not a one-time decision. That maps cleanly onto creator behavior: you can manage risk by changing how you work.

The Conclusion: Empower Conscious Creators through AI and Mindful Usage

Here is the solution, framed as a final thought experiment: What if the next creative advantage is not who uses AI most, but who uses it most consciously?

If you want a practical starting protocol, use this five-part loop:

- Name the source. What is the truth you are trying to transmit? One sentence.

- Set constraints. Audience, tone, length, format, and what must not be included.

- Give living examples. Paste a paragraph of your real writing or a past piece you are proud of.

- Use AI for leverage, not authorship. Ask for outlines, counterarguments, structure options, or clarity edits. Keep the meaning yours.

- Close the loop with accountability. Edit. Verify. Remove anything that is not true. Add what only you can add: lived experience, taste, and responsibility.
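For creators who like to keep their process in a reusable form, the loop above can be sketched as a small Python structure. This is only one way to hold the five parts together. The class name `CreativeBrief`, its field names, and the wording of the prompt lines are assumptions of mine, not a standard or any tool's API.

```python
from dataclasses import dataclass, field


@dataclass
class CreativeBrief:
    """One pass through the five-part loop, written down before prompting."""

    source: str         # 1. Name the source: the one-sentence truth
    audience: str       # 2. Set constraints
    tone: str
    length: str
    output_format: str
    must_not_include: list[str] = field(default_factory=list)
    examples: list[str] = field(default_factory=list)  # 3. Living examples
    task: str = "Suggest three outline options."       # 4. Leverage, not authorship

    def to_prompt(self) -> str:
        """Render the brief as plain text you can paste into any AI tool."""
        if not self.source.strip():
            raise ValueError("Name the source before prompting.")
        lines = [
            f"Core message (do not change its meaning): {self.source}",
            f"Audience: {self.audience}",
            f"Tone: {self.tone}",
            f"Length: {self.length}",
            f"Format: {self.output_format}",
        ]
        if self.must_not_include:
            lines.append("Do not include: " + "; ".join(self.must_not_include))
        for number, sample in enumerate(self.examples, start=1):
            lines.append(f"Voice sample {number}:\n{sample}")
        lines.append(f"Task: {self.task}")
        return "\n".join(lines)


# 5. Close the loop: this part stays with you, after the output comes back.
ACCOUNTABILITY_CHECKLIST = (
    "Edit the draft yourself.",
    "Verify every factual claim.",
    "Remove anything that is not true.",
    "Add lived experience, taste, and responsibility.",
)
```

Filling in a brief and calling `to_prompt()` gives you one well-formed request instead of a string of vague ones. The fifth step is kept as a checklist outside the prompt on purpose, since accountability is the part that cannot be delegated.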

That is how you move from fear-based narratives to “Source Intelligence.” You stop arguing about whether AI is “good” or “bad,” and you start building a practice that keeps you in the driver’s seat.

Outside Aurelda

- NIST AI Risk Management Framework (AI RMF 1.0) (2023)

- NIST Generative AI Profile (NIST AI 600-1) (guidance for genAI risk)

- OECD Recommendation on Artificial Intelligence (OECD Legal Instrument 0449) (2019)

- UNESCO AI Competency Framework for Teachers (2024, updated 2025)

- UNEP guidance and analysis on data center environmental impacts

- IEA reporting on data centers, networks, and electricity demand trends

- Estimating the Carbon Footprint of BLOOM, Luccioni, Viguier, Ligozat (JMLR 2023)

- Making AI Less “Thirsty” (water footprint of AI models), Li, Yang, Islam, Ren (arXiv)

- Energy and Policy Considerations for Deep Learning in NLP, Strubell, Ganesh, McCallum (ACL 2019)

- “Green AI,” Schwartz, Dodge, Smith, Etzioni (arXiv), on efficiency and reporting norms

